Tooba Siddiqui

Wed Apr 22 2026 • Updated Wed Apr 22 2026

13 mins Read

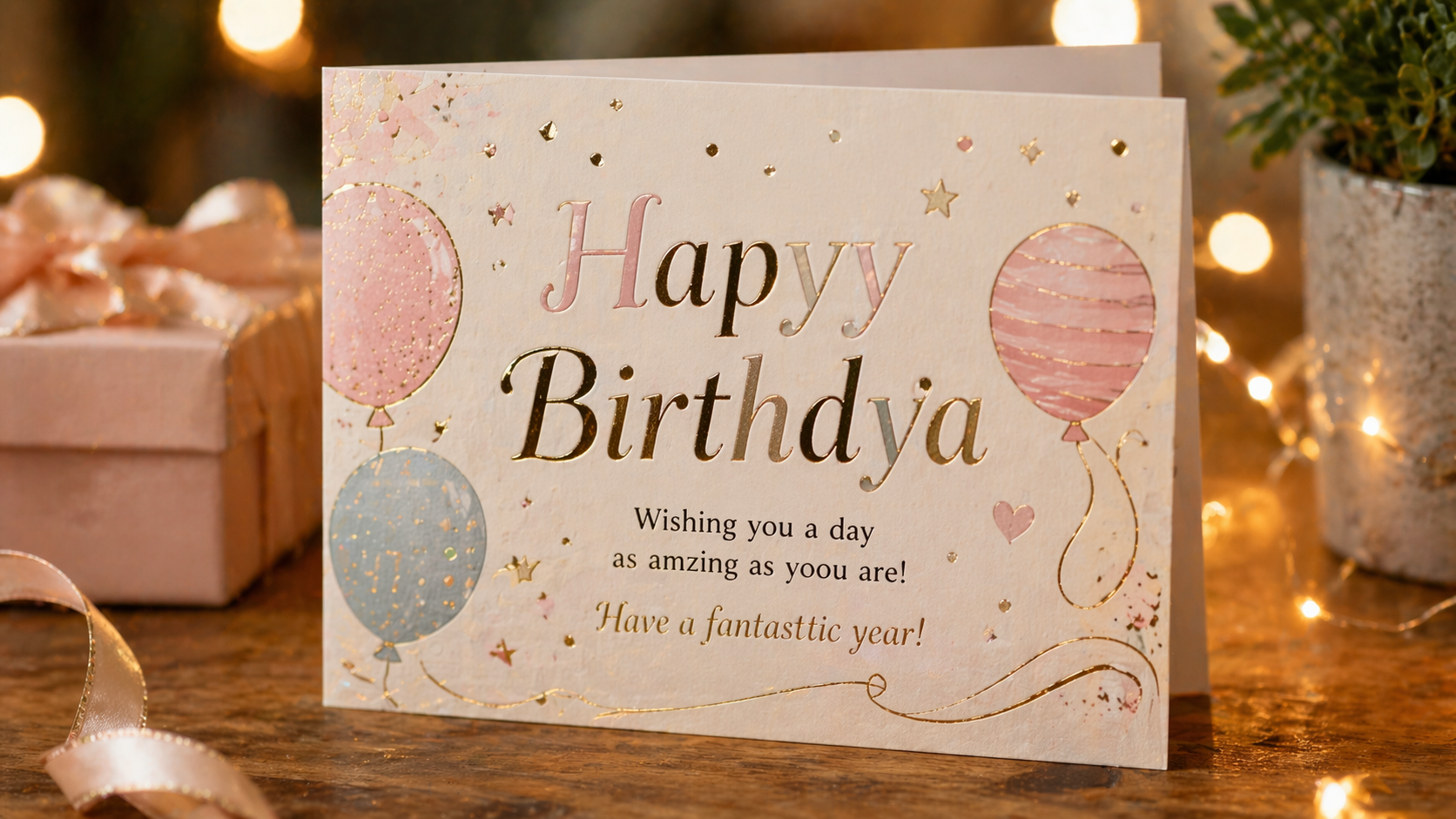

You asked an AI image generator to create a birthday card. Simple enough, right? The image comes back looking gorgeous — warm lighting, beautiful design, perfect composition. Then you look at the text. "Happy Brithday." Or maybe "Hapyy Birthdya." Or a string of symbols that vaguely resembles the alphabet after a long night out.

If you have spent any time with AI image generators, you have run into this wall — and understanding why AI image generators struggle with text reveals something important about how these models work in general, and where things are finally starting to improve.

AI image generators generate what text looks like, not what text says. They are pattern-recognition machines, not spelling engines. Everything else follows from that.

The Core Problem: AI Image Models Are Not Built for Language

AI image generators struggle with text because they are trained on visual patterns, not language rules — they approximate what text looks like rather than rendering what text actually says. An AI image model and a language model are fundamentally different machines doing fundamentally different things, and that difference is the root of every garbled word you have ever seen.

Elegant birthday card with obvious spelling errors

Elegant birthday card with obvious spelling errors

A language model, like the kind that powers chatbots, is trained to understand and produce sequences of characters. It knows that "H" comes before "e" in "Hello." It understands grammar, spelling, and structure at a character level.

An AI image generator, particularly one built on diffusion model architecture, does not work that way at all. It learns from billions of image-pixel pairs. It learns that certain shapes, textures, colors, and compositions tend to appear together. It gets extremely good at predicting what a visually coherent image looks like. But it does not read. It sees.

When you prompt an AI image generator to include the word "SALE" on a storefront sign, the model is not spelling out S-A-L-E. It is searching its learned visual patterns for something that looks like text on a sign. It is rendering an approximation of what text looks like, not what text actually is.

This is the root of every garbled word, backward letter, and nonsensical string you have ever seen in AI-generated text. For a deeper look at how text rendering AI systems handle this problem technically, understanding the underlying architecture first makes everything else clearer.

Why Do AI Image Generators Struggle with Text Specifically?

Several compounding factors make AI text rendering one of the hardest unsolved problems in image generation: training data that never enforced character accuracy, architectures built around visual probability rather than linguistic rules, and enormous variation in how text looks across fonts, styles, and languages.

Training data does not enforce character-level accuracy. When datasets are assembled for training image models, images are labeled broadly. A photo of a coffee shop might be tagged with "cafe," "interior," "morning," "warm lighting." Nobody is annotating whether the chalkboard in the background correctly spells "Espresso." The model never learns to care about accurate letter-by-letter rendering because the training process never asked it to.

The model learns visual probability, not linguistic rules. AI image generators are built to maximize visual coherence and aesthetic quality. They learn that text-shaped objects appear in certain contexts — menus, signs, labels, packaging. But the specific characters within that text are just another texture to approximate. The model produces whatever letter-like shapes feel visually plausible in context, not whatever letters are actually correct.

Fonts, orientations, and styles create enormous variation. The word "Open" written in a handwritten script, a bold serif font, a neon sign style, and an engraved plaque all look radically different visually while carrying the same meaning. For a model learning visual patterns, these are almost entirely different objects. Generalizing across that variation to produce accurate text in AI images in any style is an immense challenge.

The Tokenization Gap

There is a specific technical bottleneck worth understanding. Diffusion models process images by breaking them into patches — small visual tiles, typically 8x8 or 16x16 pixels, that the model reconstructs during generation. These patches contain visual information but have no concept of individual characters.

When you type "write the word OPEN on the door" in your prompt, the language encoder tokenizes that prompt and converts it into an embedding. But that embedding does not map cleanly to individual rendered glyphs in the output. The model knows text should be present. It does not know how to place exact characters with spatial precision because the architecture never required that kind of character-level control.

Why Curved, Stylized, or Handwritten Text Is Worse

Straight, clean, block-letter text in a standard font is already difficult. Add curves, stylization, distortion, or a handwritten look, and accuracy drops sharply. More visual complexity means more visual noise for the model to navigate. The gap between "visually plausible text" and "accurately spelled text" grows wider the more decorative the style becomes.

This problem also scales with language. Models trained predominantly on English-language data perform better on English text than on other scripts. Non-Latin writing systems like Arabic, Chinese, Devanagari, or Cyrillic are often severely distorted or rendered as decorative nonsense.

Common AI Text Rendering Failures You Have Probably Seen

The most common AI typography issues fall into six predictable categories, each rooted in the same underlying cause: the model approximates what text looks like rather than rendering what text says.

- Scrambled or misspelled words are the most common failure. Letters appear out of order, swapped, or replaced entirely. "Welcome" becomes "Welcoe" or "Weclome" or something that shares maybe three letters with the original.

- Extra or missing characters show up constantly. A five-letter word might come out as seven letters, or three. The model fills in or drops characters without any awareness that something is wrong.

- Repeated syllables or nonsense strings happen when the model leans hard into its "text texture" pattern without even attempting to render real words. You get strings like "SALLE SALLE SA" instead of "SALE."

- Inconsistent font style within a single word is a subtle failure. Different letters in the same word may render in slightly different fonts, weights, or sizes, because the model is constructing the word letter-by-letter approximation rather than as a unified typographic unit.

- Numbers and special characters are especially unreliable. Digits, punctuation, currency symbols, and mathematical notation have far fewer examples in training data and tend to come out heavily distorted or substituted with similar-looking shapes.

- Non-Latin scripts render as decorative gibberish. Arabic, Chinese, Devanagari, and Cyrillic text are frequently unrecognizable, reflecting the severe underrepresentation of non-English scripts in most training datasets.

Does the Language of Your Prompt Matter?

The language you use to write your prompt affects how well the model interprets and renders text in AI images.

English-language prompts generally yield better results across most major AI image generators. This comes down to training data bias. The vast majority of labeled image datasets are in English, and English-language content dominates the internet data used to train these models. The model has seen far more examples of English text in images than text in any other language.

For users working with non-Latin scripts, this creates a compounded challenge. Not only is text rendering already unreliable, but the model may have very few reference examples for how that script looks in context. The result is often decorative gibberish that bears no resemblance to the intended characters.

Even within Latin-script languages, quality varies. French, Spanish, German, and Portuguese prompts tend to perform reasonably well. Languages with less representation in training data see steeper quality drops. Understanding how language choice affects AI model performance can help you get more consistent results in your workflow.

What's Being Done to Fix AI Text Rendering?

This is one of the most actively researched problems in AI image generation right now, and progress is real. Two architectural approaches are leading the improvement: hybrid models that maintain tighter coupling between the language layer and the image generation layer throughout the process, and training datasets that specifically annotate text regions with character-level accuracy rather than broad image labels.

Seven models have made the most significant improvements in text rendering accuracy as of 2025–2026. I tested these seven AI image generators for text rendering using one prompt on ImagineArt AI image generator:

A vintage café storefront at night with a large glowing neon sign that reads 'THE GOLDEN CUP', a smaller chalkboard below it that says 'Est. 1987', and a tiny window sticker that reads 'Open Daily' — cinematic lighting, high detail, photorealistic.

1. ImagineArt 2.0

ImagineArt 2.0 brings sharper, more reliable text generation to creative workflows, representing a meaningful upgrade in how text is handled within the broader AI image generator ecosystem. Combined with the platform's AI workflow tools, it gives creators a more complete pipeline for handling text-heavy image projects from prompt to final output. For teams and organizations with serious production needs, AI Enterprise solutions offer additional control and consistency at scale.

All three text elements are in different font styles, sizes, and colors with perfect legibility.

All three text elements are in different font styles, sizes, and colors with perfect legibility.

2. Ideogram AI

Ideogram AI has built a strong reputation for typographic accuracy, specifically prioritizing legible, correctly spelled text as a core feature rather than an afterthought. One of the studies conducted in 2025 found Ideogram as one of the best AI image generator for readability and legible visual designs.

The AI text rendering is perfect with correct spelling and readability. However, it didn’t quite understand the ‘vintage’ vibes.

The AI text rendering is perfect with correct spelling and readability. However, it didn’t quite understand the ‘vintage’ vibes.

3. Recraft v4

Recraft V4 targets design-grade text rendering, making it particularly useful for branding, marketing, and graphic design workflows where text accuracy is non-negotiable.

Recraft v4 is great forr stylized typography and nails the cafe name placement. However, the ‘Open Daily’ gives it the AI-look.

Recraft v4 is great forr stylized typography and nails the cafe name placement. However, the ‘Open Daily’ gives it the AI-look.

4. Nano Banana Pro

Nano Banana Pro by Google DeepMind brings research-grade architecture to the text rendering problem, leveraging DeepMind's deep technical resources to tackle character-level accuracy in ways that consumer-grade models have not fully addressed.

The vibes, the correct spellings, the text placement — Nano Banana Pro checks all the boxes.

The vibes, the correct spellings, the text placement — Nano Banana Pro checks all the boxes.

5. GPT Image 2

GPT Image 2 from OpenAI represents a significant jump in text coherence, benefiting from tighter integration between OpenAI's language modeling expertise and its image generation capabilities. The model is yet to be released but A/B testing showed strong prompt understanding and small text rendering.

GPT image 2 renders small text very accurately

GPT image 2 renders small text very accurately

6. Flux.2

FLUX.2 advances text fidelity through improved latent representations, giving the model a more precise internal language for rendering accurate characters across diverse styles and contexts.

The text is grammatically correct, legible, and well-placed

The text is grammatically correct, legible, and well-placed

7. Seedream 5.0

Seedream 5.0 by ByteDance brings production-level text rendering precision to commercial creative workflows. Where earlier models produced decorative approximations of typography, Seedream 5.0 renders legible, correctly spelled text on signs, labels, packaging, and UI elements with near-accurate character fidelity. Its advanced typography engine understands design hierarchy and negative space — making it particularly strong for poster design, product packaging, and marketing materials where both visual quality and text accuracy are non-negotiable.

The text is grammatically correct and completely legible. But, the AI image model couldn’t achieve the required photorealism look

The text is grammatically correct and completely legible. But, the AI image model couldn’t achieve the required photorealism look

The Role of the AI Image Editor in Fixing Text

Even with improving generation quality, post-generation correction remains a practical and important part of the workflow. An AI image editor lets you go back into a generated image and fix problem areas without regenerating from scratch.

Inpainting — selecting a specific region of an image and regenerating just that area — is especially useful for text correction. You can isolate the text region, re-prompt with the exact characters you need, and integrate the corrected version back into the original image. Layer-based editing workflows take this further, giving you precise control over exactly where and how text appears in the final image.

Practical Tips: How to Get Better Text in AI Images Right Now

Prompt engineering for text is the most immediately actionable skill you can develop while generation technology catches up. These six practices improve results today:

- Keep text short and simple. Single words or very short phrases render more reliably than full sentences. The longer the text string, the more opportunities for errors to compound.

- Put your target text in quotation marks. Many models respond better when the exact desired text is explicitly quoted. Instead of "a sign saying welcome," try "a sign with the text 'Welcome' in bold letters."

- Specify font style clearly. Ambiguity about the visual style of text gives the model more room to interpret freely and go wrong. "Clean sans-serif font" or "bold block letters" constrain the output in helpful ways.

- Generate multiple variations and select the best. Text rendering has a significant random component. Running five to ten generations of the same prompt and picking the most accurate result is faster than trying to iterate toward perfection on a single generation.

- Use an AI image editor to correct after generation. Do not try to get everything right in the generation step. Generate the best overall composition you can, then use inpainting or layered editing to fix text accuracy in post.

- Use tools specifically designed for text-aware generation. Models that have prioritized text rendering as a feature will consistently outperform general-purpose generators on text-heavy tasks. Ideogram AI, Recraft V4, and ImagineArt 2.0 are purpose-built for this.

Ready to Render Text Accurately with ImaginArt?

AI image generators have come an extraordinary distance in just a few years. The quality, creativity, and photorealism available today would have been unimaginable not long ago. But AI image text accuracy has remained a genuine weak point, rooted in the fundamental architecture of how diffusion models learn and generate images.

The problem is well understood, actively worked on, and already showing real improvement. Models like ImagineArt 2.0 are making readable text in AI images more reliable with each generation, and the combination of smarter generation with capable post-editing tools means you do not have to wait for perfection to produce great work.

Use the AI image generator to get the best composition you can, then reach for the AI image editor to polish the details. The workflow exists today. The full solution is on its way.

Frequently Asked Questions

AI image generators are trained to learn visual patterns, not language. They see text as a visual texture rather than a sequence of meaningful characters. Without character-level understanding built into the architecture, the model approximates what text looks like rather than rendering it accurately — which results in misspellings, scrambled letters, and inconsistent characters.

Most AI image generation systems were not originally designed with text accuracy as a core objective. Training datasets rarely annotate individual characters in images, so the model never develops a precise internal model of correct spelling or typography. Newer architectures are addressing this directly by building tighter connections between the language layer and the image generation layer, but it remains a known limitation across diffusion-based models.

Training data bias is the primary cause. The vast majority of labeled image datasets used to train large diffusion models contain English-language content. Models have seen significantly fewer examples of Arabic, Chinese, Devanagari, Cyrillic, and other non-Latin scripts in context, which means the model has weaker visual references for those characters. The result is often decorative distortion rather than recognizable text.

ImagineArt 2.0 is one of the best AI image generators at rendering text, along with Ideogram AI, Recraft V4, Nano Banana Pro by Google DeepMind, GPT Image 2, FLUX.2, and Seedream 5.0. Results still vary by prompt complexity, font style, and text length, but these models treat text accuracy as a design priority rather than an incidental feature.

Yes. Using an AI image editor with inpainting capabilities, you can select the text region in a generated image and regenerate just that area with a corrected prompt. This is often the most reliable method for achieving accurate text in AI-generated images, especially for anything longer than a single word or short phrase.

Yes, and improvement is already happening rapidly. The combination of text-annotated training datasets and hybrid architectures that maintain tighter language-image coupling throughout generation is producing measurably better results. Models like ImagineArt 2.0 and GPT Image 2 represent the current frontier, and the gap between generation quality and text accuracy is narrowing with each model generation.

Tooba Siddiqui

Tooba Siddiqui is a content marketer with a strong focus on AI trends and product innovation. She explores generative AI with a keen eye. At ImagineArt, she develops marketing content that translates cutting-edge innovation into engaging, search-driven narratives for the right audience.