Syed Anas Hussain

Fri Apr 17 2026 • Updated Mon Apr 20 2026

Most teams are testing 3 to 5 ad creatives a month. The teams winning on Meta, TikTok, and YouTube are testing 20 to 50. The gap is not strategy, budget, or talent. It is the speed they achieve using AI. So, how do you use AI to test ad creative variations? Let us find out.

How to Use AI to Test Ad Creative Variations

You use AI to test ad creative variations by generating multiple versions of your hook, visual format, message angle, and CTA at speed, then running structured tests to identify which combination drives the best performance and feeding those results back into your next round of generation.

But if you really want to understand how to use AI to test ad creative variations — what to test, how to structure your tests, and how to build a system that compounds over time — this article is for you.

What Is Ad Creative Variation Testing?

Creative variation testing is not running two ads and picking the winner. It is a repeatable system that tells you what is working, why it is working, and what to build next.

There are three types of tests worth knowing:

- A/B testing compares two versions with one variable changed — same audience, same budget, one difference. The simplest and most reliable method for isolating what drives performance.

- Multivariate testing changes multiple elements simultaneously to find the best combination. Because every combination needs its own share of impressions and conversions, it requires significantly more traffic and budget to produce reliable results. Best used at scale.

- Concept testing compares entirely different creative angles — problem-led vs benefit-led, UGC-style vs polished production, emotional vs rational messaging. Higher risk, higher reward. Always worth running at the start of a new campaign.

Most teams start with A/B testing and concept testing. Multivariate comes later, once you have enough data and traffic to support it.

What to Test: The 4 Creative Variables That Move the Needle

Not everything is worth testing. These four variables produce the most consistent, actionable learnings.

1. The Hook

The hook is the first three seconds of a video or the opening visual of a static ad. It is the single highest-leverage variable in most ad accounts.

If your hook does not stop the scroll, nothing else matters. The body copy, the offer, the CTA: none of it gets seen. On Meta, testing the hook while keeping everything else constant often produces double-digit CTR improvements. Aim for a hook rate (3-second video views divided by impressions) above 30% on video ads. If it is lower, the opening frame is the problem.
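As a quick sketch of that check, the Python snippet below computes hook rate and flags an ad that falls under the 30% target. It assumes hook rate means 3-second video views divided by impressions, a common working definition. The function names and sample numbers are illustrative and do not come from any ad platform's API.

```python
HOOK_RATE_TARGET = 0.30  # the 30% benchmark mentioned in the text


def hook_rate(three_second_views: int, impressions: int) -> float:
    """Share of impressions that turned into 3-second video views."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return three_second_views / impressions


def needs_new_hook(three_second_views: int, impressions: int) -> bool:
    """True when the opening frame is underperforming the target."""
    return hook_rate(three_second_views, impressions) < HOOK_RATE_TARGET


# Hypothetical numbers: 2,500 three-second views on 10,000 impressions.
print(needs_new_hook(2_500, 10_000))  # True, because 25% is below 30%
```

Pull the two inputs from your ads reporting export; only the ratio matters, so the check works at any spend level.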

Test hooks that are genuinely different from each other. "Save money on software" versus "Reduce software costs" are not two hooks — they are the same idea reworded. A real hook test looks like: problem-first opening versus product demo opening versus bold statistic opening. Three different approaches, not three different phrasings.

2. The Visual Format

Before you test anything inside a creative, test the format itself. Static image, short-form video, and UGC-style content are not interchangeable. Different audiences respond to different formats, and the algorithm treats them as distinct creative types.

A polished product video and a creator-style talking-head clip can promote the same offer and produce completely different results with the same audience. You cannot know which works until you test both. Format testing is concept-level — run it early, before you spend budget optimizing the wrong creative type.

3. The Message Angle

The message angle is the strategic premise of the ad. Are you leading with a problem the audience has? A benefit the product delivers? Social proof from someone who has already used it? A bold claim? An emotional story?

Changing the message angle is the most disruptive test you can run — and the most valuable. A winning angle can outperform a losing one by 3x or more on conversion rate. Test angles early in a campaign, before you invest in polishing a single direction.

Run 3 to 5 distinct angles against each other. Once a clear winner emerges, then start iterating inside it.

4. The CTA

Test the CTA last. After the hook, format, and angle are validated, CTA variations typically produce 5 to 15% performance differences — meaningful, but not where you want to start.

What to test: the action word ("Shop Now" vs "Get Started" vs "Learn More"), the urgency level ("Limited offer" vs "Try it free"), and the placement. A CTA test on an unvalidated creative tells you nothing useful. Run it on a proven winner.

Why Manual Creative Testing Does Not Scale

Here is the math problem manual testing creates.

Finding a winning creative requires testing across multiple hooks, formats, angles, and CTAs. A basic test matrix — 5 hooks, 3 formats, 3 angles — already gives you 45 combinations. Manual production means a designer for every visual, a brief for every variation, and a file export and platform upload for each one. Even with an efficient team, you are looking at days to produce what you need for one test cycle.
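To see how quickly the matrix grows, here is a minimal Python sketch that enumerates it. The hook, format, and angle labels are placeholders borrowed from examples in this article; swap in your own.

```python
from itertools import product

# Placeholder labels; replace with the variations you actually plan to test.
hooks = ["problem-first", "product demo", "bold statistic",
         "social proof", "curiosity question"]
formats = ["static image", "short-form video", "UGC-style"]
angles = ["problem-led", "benefit-led", "emotional story"]

# Every hook x format x angle combination becomes one creative to produce.
test_matrix = [
    {"hook": hook, "format": fmt, "angle": angle}
    for hook, fmt, angle in product(hooks, formats, angles)
]
print(len(test_matrix))  # 45

# Adding just three CTA options triples the production load.
ctas = ["Shop Now", "Get Started", "Learn More"]
print(len(test_matrix) * len(ctas))  # 135
```

Each dictionary in `test_matrix` is effectively one brief, which is why the count matters more than any single creative.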

By the time your 10 variations are ready, the algorithm has already spent your budget learning on stale creative. The campaign window has closed. The audience has moved on.

AI changes the unit economics of creative testing. What used to take days takes minutes. What used to require a design team can now be executed by a single marketer. And critically, the volume advantage compounds. The more you test, the faster you learn. The faster you learn, the better your next campaign performs.

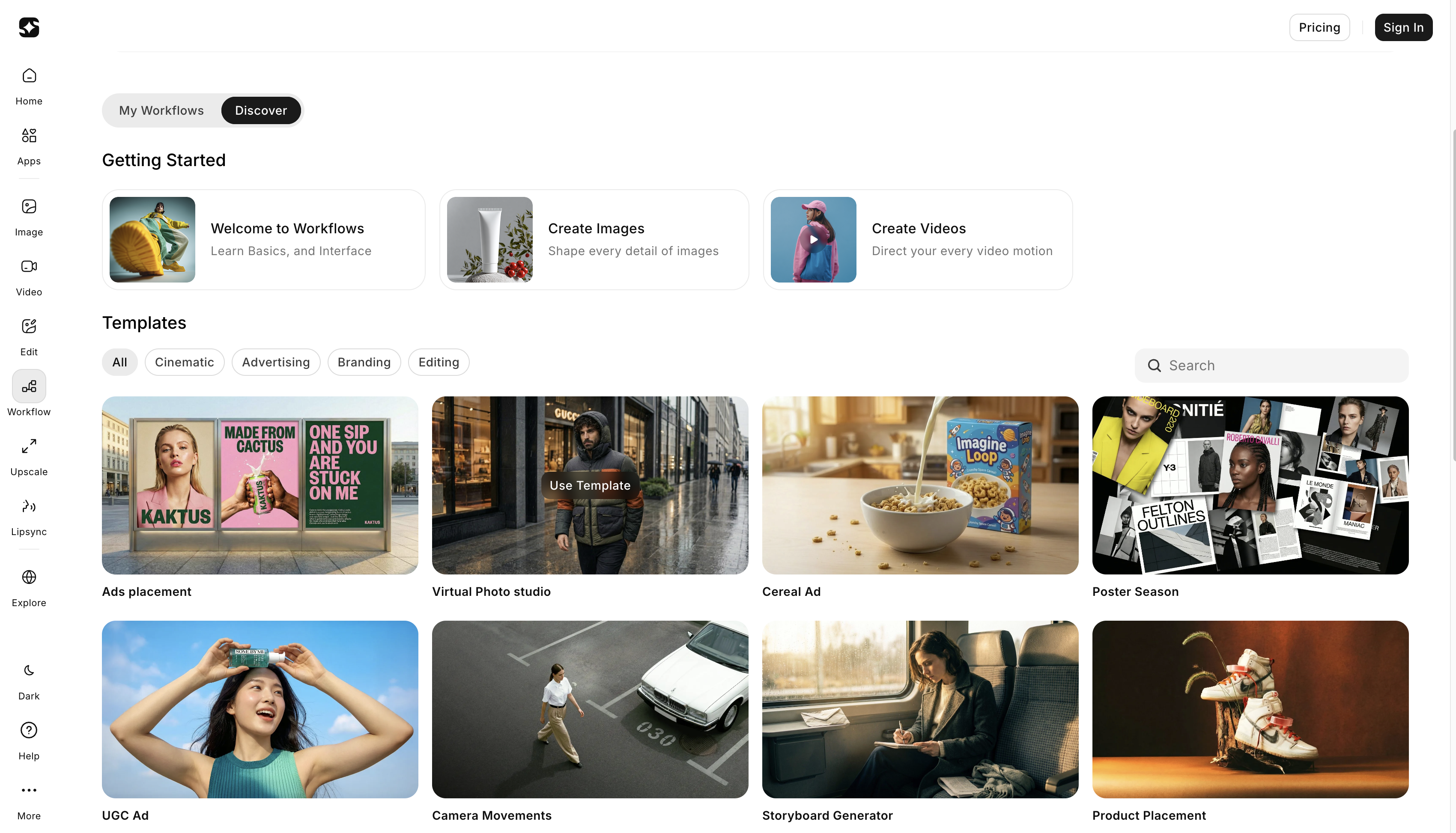

How to Use ImagineArt to Generate and Test Ad Creative Variations

This is where the system comes together. ImagineArt gives you the generation speed that makes high-volume creative testing possible across images, video, and multi-format campaigns — without a production team.

Generate Visual Variations at the Concept Stage

Use the ImagineArt AI Image Generator to produce multiple visual directions from a single brief. Different compositions, product angles, background treatments, and visual styles — generated in minutes, not days.

This means you can test concept-level differences — lifestyle imagery versus clean product shot versus bold text-led visual — before committing design resources to any single direction. You find out what resonates with real audience data, then invest in the direction that wins.

No designer briefing. No revision rounds. No waiting three days to find out your concept was wrong.

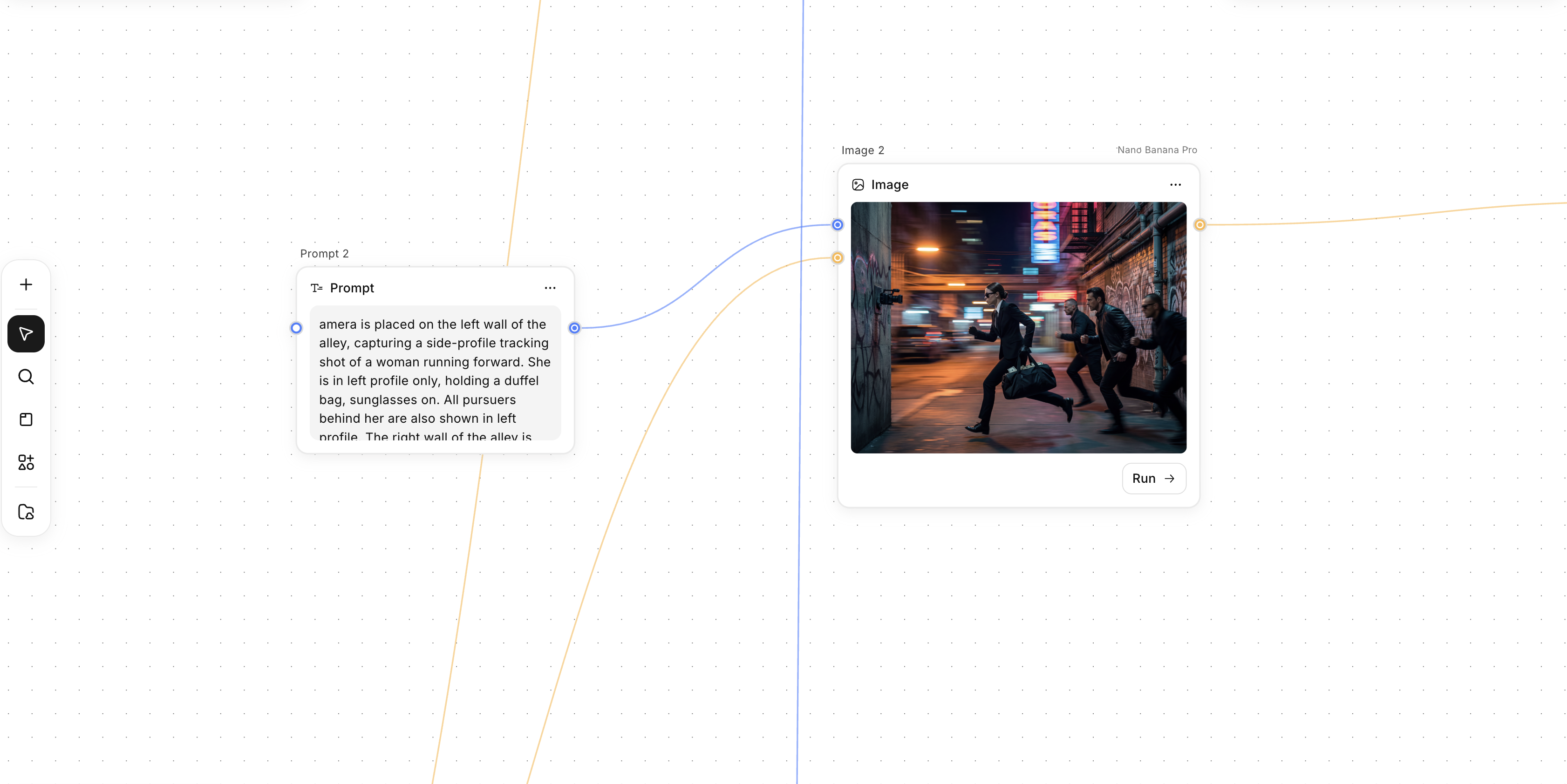

Generate Video Variations for Hook Testing

Hook testing is where video creative lives or dies — and it is also where manual production creates the biggest bottleneck. Briefing, shooting, editing, and exporting five different 3-second opening sequences can take a week. With the ImagineArt AI Video Generator, you generate them in minutes.

Test 5 different hook approaches simultaneously: problem-first, product demo, bold claim, social-proof opening, and curiosity-driven question. Same body content, same CTA, five different openings. The one with the highest hook rate becomes the foundation for everything you build next.

This is how the best-performing teams operate in 2026. They generate hooks fast, test them cheap, and scale the winner.

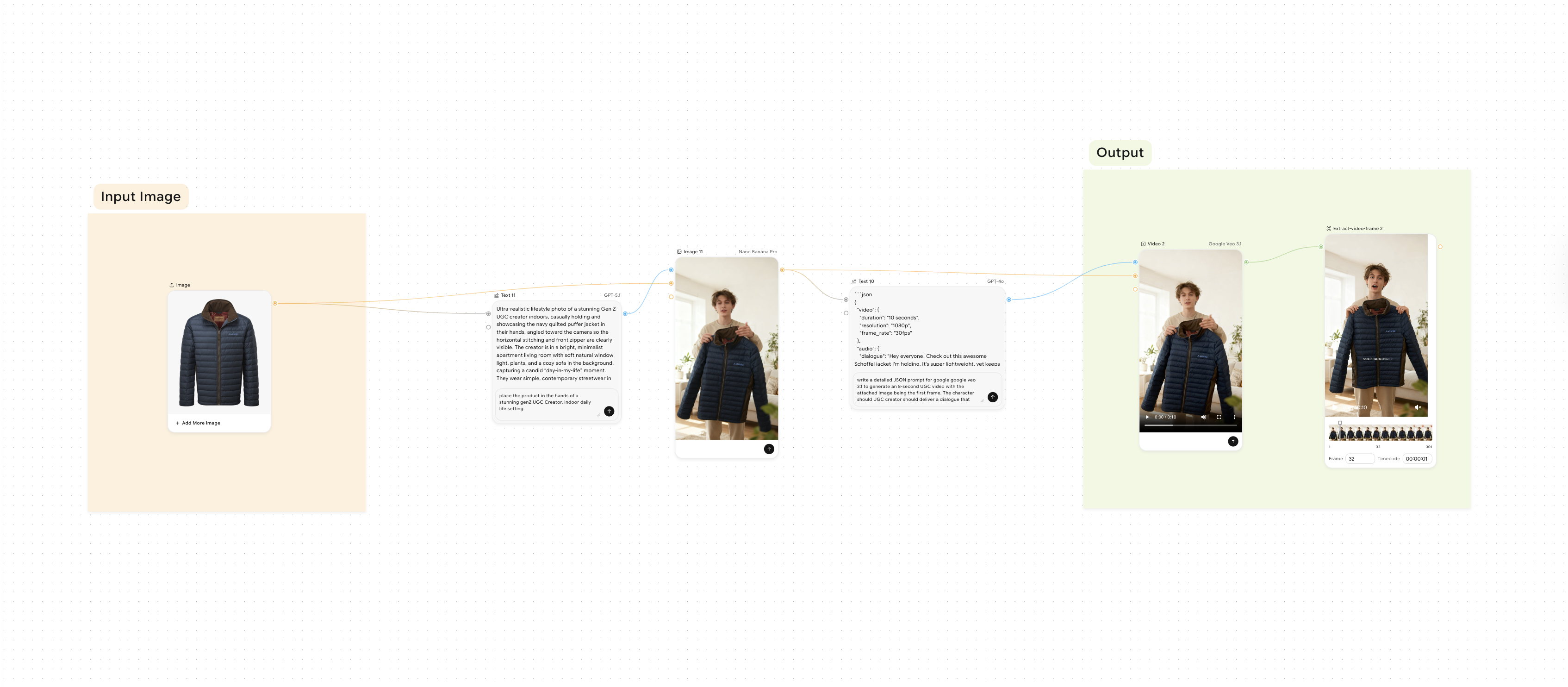

Build a Reusable Variation Pipeline with Workflows

For teams running multiple test cycles across multiple campaigns, building variations one at a time is still too slow. ImagineArt Workflows solves this with a node-based creative automation canvas.

Connect your input — a product image, a campaign brief, a winning creative — to multiple output branches. One input generates a static ad, a vertical video, a widescreen banner, and a UGC-style variation simultaneously. Build the pipeline once. Run it every test cycle. Every campaign.

This is not just faster production. It is a structural shift from creative testing as a series of one-off tasks to creative testing as a repeatable, automated system.

Recommended read: How to Build AI Workflows for Your Creative Team

How to Structure Your Tests So the Data Actually Tells You Something

Generating variations is only half the system. How you run the tests determines whether the data gives you insight or noise.

Test one variable at a time.

Changing the hook and the visual and the copy in the same test tells you which combination won — not what caused it to win. Isolate one variable per test. It takes longer to build knowledge this way, but the knowledge compounds. After five tests, you know what works and why.

Run tests long enough.

48 hours and 12 conversions is not a test. Wait at least 7 to 10 days, or until each variation has reached enough impressions for statistical confidence. Meta generally recommends 100 or more conversions per variation for high confidence. If your budget does not support that, use directional signals — but label them as directional, not conclusive.
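One way to make "statistical confidence" concrete is a two-proportion z-test on conversion rate. The sketch below is a standard textbook test, not Meta's own methodology. The 100-conversion floor comes from the guidance above, while the 0.05 significance level and the function names are assumptions for illustration.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rate."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("each variation needs at least one visitor")
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variance at all, so no evidence of a difference
    z = (conv_b / n_b - conv_a / n_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


def verdict(conv_a, n_a, conv_b, n_b, alpha=0.05, min_conversions=100):
    """Label a result as directional, conclusive, or no clear winner."""
    if min(conv_a, conv_b) < min_conversions:
        return "directional"  # too few conversions to call it
    _, p_value = two_proportion_z_test(conv_a, n_a, conv_b, n_b)
    return "conclusive" if p_value < alpha else "no clear winner"


# Hypothetical results: 12 vs 20 conversions is only a directional signal.
print(verdict(12, 1_000, 20, 1_000))
```

With larger samples, for example 100 versus 150 conversions on 10,000 visitors each, the same function returns "conclusive" because the gap is unlikely to be noise.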

Define your success metric before you launch.

If you are testing for awareness, hook rate and CTR are your primary metrics. If you are testing for conversion, CPA is what matters. Defining this before launch prevents the trap of cherry-picking the metric that makes your preferred variation look like the winner.
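A small sketch shows why the metric has to be fixed up front. In the hypothetical data below, one variation wins on CTR and the other wins on CPA, so whoever picks the metric after the fact picks the winner. The class, the goal names, and the numbers are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VariationStats:
    name: str
    spend: float
    impressions: int
    clicks: int
    conversions: int


def ctr(v: VariationStats) -> float:
    return v.clicks / v.impressions


def cpa(v: VariationStats) -> float:
    # No conversions means an unbounded cost per acquisition.
    return v.spend / v.conversions if v.conversions else float("inf")


# goal -> (metric function, True if a higher value is better)
METRIC_FOR_GOAL = {"awareness": (ctr, True), "conversion": (cpa, False)}


def pick_winner(variations, goal: str) -> VariationStats:
    """Choose the winner using only the metric committed to before launch."""
    metric, higher_is_better = METRIC_FOR_GOAL[goal]
    chooser = max if higher_is_better else min
    return chooser(variations, key=metric)


a = VariationStats("A", spend=500.0, impressions=20_000, clicks=600, conversions=10)
b = VariationStats("B", spend=500.0, impressions=20_000, clicks=400, conversions=25)
print(pick_winner([a, b], "awareness").name)   # A (CTR 3% vs 2%)
print(pick_winner([a, b], "conversion").name)  # B (CPA 20 vs 50)
```

Writing the goal into the test plan before launch is the real safeguard; the code only makes that commitment explicit.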

Document what won and why.

Running tests without recording results is testing without memory. Keep a winners library organized by creative component: winning hooks, winning angles, winning formats, winning CTAs — each with the performance data and the context that explains the result. After 10 test cycles, this library becomes your most valuable creative asset.
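A winners library can start as something very simple. The sketch below is one possible shape, assuming four component types and a free-text context field; the class and field names are hypothetical, and a spreadsheet with the same columns would work just as well.

```python
from collections import defaultdict
from dataclasses import dataclass

COMPONENTS = {"hook", "angle", "format", "cta"}


@dataclass(frozen=True)
class WinnerEntry:
    component: str    # one of COMPONENTS
    description: str  # what the winning element was
    metric: str       # the success metric defined before launch
    value: float      # the result on that metric
    context: str      # audience, platform, dates: why the result happened


class WinnersLibrary:
    """Stores winning elements grouped by creative component."""

    def __init__(self) -> None:
        self._entries = defaultdict(list)

    def record(self, entry: WinnerEntry) -> None:
        if entry.component not in COMPONENTS:
            raise ValueError(f"unknown component: {entry.component}")
        self._entries[entry.component].append(entry)

    def winners(self, component: str) -> list:
        return list(self._entries.get(component, []))


library = WinnersLibrary()
library.record(WinnerEntry("hook", "problem-first opening", "hook_rate",
                           0.34, "cold audience, vertical video"))
print(len(library.winners("hook")))  # 1
```

The `context` field is the part teams tend to skip, and it is the part that explains the result later.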

Refresh before fatigue hits.

Most ads fatigue within 2 to 4 weeks. High-growth brands rotate at least 20% of their active ads every 7 days — not because the ad stopped working, but because they are proactively testing the next round before it does. Reactive refresh means your CPA is already climbing before you act. Proactive refresh keeps performance stable.
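The 20% weekly rotation is easy to turn into a number. The sketch below rounds up so you never rotate less than the target share, and it assumes the oldest ads are the ones to replace first. That ordering is a simplification of my own; in practice you might rank by rising CPA or frequency instead.

```python
from datetime import date


def rotation_count(active_ads: int, percent: int = 20) -> int:
    """Smallest whole number of ads that covers `percent` of the active set."""
    # Integer arithmetic avoids floating-point surprises when rounding up.
    return (active_ads * percent + 99) // 100


def ads_to_rotate(launch_dates: dict[str, date], percent: int = 20) -> list[str]:
    """Pick the oldest ads first (an assumption: oldest is closest to fatigue)."""
    oldest_first = sorted(launch_dates, key=launch_dates.get)
    return oldest_first[: rotation_count(len(launch_dates), percent)]


# Hypothetical account with five active ads.
active = {
    "ugc_hook_a": date(2026, 3, 30),
    "demo_hook_b": date(2026, 4, 6),
    "static_offer": date(2026, 3, 23),
    "stat_hook_c": date(2026, 4, 13),
    "testimonial": date(2026, 4, 10),
}
print(ads_to_rotate(active))  # ['static_offer']
```

Run it at the start of each 7-day cycle so the replacement creatives are queued before performance starts to slip.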

Ready to Test Ad Creative Variations with AI?

Creative testing used to be limited by how fast your team could produce variations. With ImagineArt, that limit is gone. You generate at speed, test with structure, document what works, and build a system that gets smarter with every campaign.

The teams winning on paid media in 2026 are not the ones with the biggest creative budgets. They are the ones with the best creative testing systems. This is how you build one.

Recommended read: Best AI Content Repurposing Tools in 2026

Frequently Asked Questions

What is ad creative variation testing?

Ad creative variation testing is a structured process of producing and running multiple versions of an ad, changing one variable at a time (such as the hook, visual format, message angle, or CTA), to identify which version drives the best performance. It is not a one-off A/B test but a repeatable system that builds knowledge over time.

How do you use AI to test ad creative variations?

You use AI to test ad creative variations by generating multiple versions of your hook, visual, angle, and CTA at speed using a tool like ImagineArt, then running structured tests across those variations. AI removes the production bottleneck that previously limited how many variations a team could realistically test in a campaign window.

How many ad creative variations should you test?

High-performing teams test 20 to 50 variations per month. A basic test matrix of 5 hooks, 3 formats, and 3 angles already produces 45 combinations. Start with concept-level differences (different hooks, formats, and angles) before iterating on smaller details like CTA wording.

Which creative variable should you test first?

The hook, meaning the first 3 seconds of a video or the opening visual of a static ad, is the single highest-leverage variable. If the hook does not stop the scroll, no other element gets seen. Test hook angles first, before investing in message or CTA optimization.

Syed Anas Hussain

Syed Anas Hussain is a computer scientist blending technical knowledge with marketing expertise and a growing passion for AI innovation. Curious by nature, he dives into new AI sciences and emerging trends to produce thoughtful, research-led content. At ImagineArt, he helps audiences make sense of AI and unlock its value through clear, practical storytelling.