HappyHorse 1.0

HappyHorse 1.0 lets you generate cinematic 1080p clips with synchronized audio from a text prompt or image. Type your scene, hit generate, and walk away with broadcast-ready video and real sound in one pass.

How to Generate Video Using HappyHorse

Add Your Prompt

Type a prompt describing your scene, characters, camera movement, and mood. HappyHorse 1.0 understands natural language and follows instructions closely. The more specific your prompt, the more cinematic your output.

Choose Your Video Settings

Select your preferred aspect ratio, including portrait and landscape orientations, and set the video resolution to as high as 1080p. HappyHorse 1.0 supports video durations of up to 20 seconds.

Generate & Download

Click Generate, and HappyHorse 1.0 renders a cinematic video with native audio, smooth motion, and visual consistency. If needed, you can edit the result directly in the ImagineArt AI video editor.

Generate AI Video with Sound in One Pass

HappyHorse 1.0 has a unified Transformer architecture that generates video frames and audio together in a single pass, producing synchronized dialogue, ambient sound, and Foley effects without a separate pipeline. What comes out is a finished clip with sound already baked in, ready to publish. For creators who need to move fast, that alone makes it worth using.

Multilingual Video with Native Lip-Sync

On ImagineArt, HappyHorse 1.0 handles seven languages natively: English, Mandarin, Cantonese, Japanese, Korean, German, and French. It generates accurate lip movements alongside the video in the same generation pass. You set the language, describe your scene, and get back a clip where the mouth movements actually match the audio. No voice talent sourcing, no post-production dubbing, no extra tools.

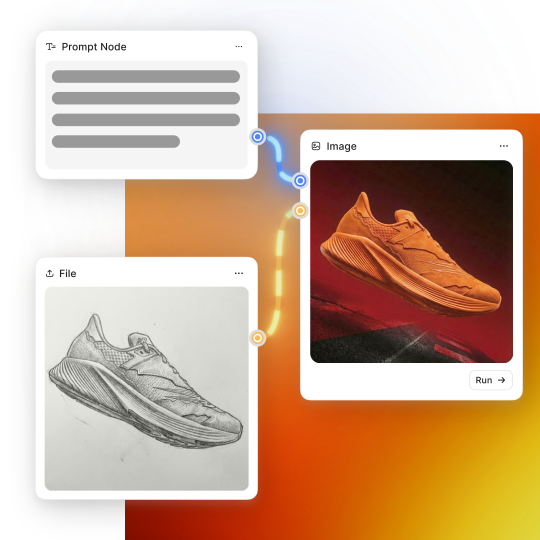

Multi-Modal Video Creation

HappyHorse 1.0 on ImagineArt supports both text-to-video and image-to-video with the same level of output quality. Feed it a detailed scene description, and it executes camera directions, motion cues, and subject behavior with high fidelity. Upload a reference image, and it animates the image with realistic physics while keeping the original subject intact. Both modes output native 1080p with synchronized audio.

Multi-Shot Storytelling

The AI video generation model is built specifically for multi-shot video generation: from a single prompt, it holds character identity, wardrobe, visual style, and scene atmosphere across transitions. That makes it useful for product stories, brand narratives, social series, and concept proofs where the output needs to look like it was planned, not stitched together. Available on ImagineArt, it is one of the few AI video tools that treats a sequence as a single creative problem rather than a series of separate generations.

Trusted by Professionals and Creators from Top Brands and Companies